DarkGPT Explained: What It Is, How It Works, Risks, and Reality (2026 Guide)


Artificial intelligence is no longer just a productivity tool. It has become part of how cyberattacks are planned, tested, and executed. Over the past year, one term has started appearing more often in cybersecurity discussions: DarkGPT.

At first glance, DarkGPT sounds like a hidden version of AI designed for hacking. But when you look closer, the picture is less clear. There is no verified platform, no official release, and no single system you can point to. Still, the conversations around DarkGPT continue to grow.

That gap between what is claimed and what is proven is exactly where the risk sits. The concern is not just whether DarkGPT exists as a tool. The concern is how AI, when used without restrictions, changes the way attacks are carried out.

TL;DR

DarkGPT is not a clearly defined or officially verified AI tool. It is a term used to describe unrestricted or modified AI systems often linked to cybersecurity risks. The real concern is not the tool itself, but how AI is being used to scale phishing, credential theft, and identity-based cyberattacks in ways that are harder to detect and easier to execute.

Key Takeaways

- DarkGPT is not a confirmed standalone platform

- It represents the idea of unrestricted AI use in cybersecurity

- The real risk is AI-driven phishing and credential theft

- Identity-based cyberattacks are increasing because of AI assistance

- Organizations need to shift from system security to identity-focused defense

What Is DarkGPT

DarkGPT is often described as an AI system that operates without the safety controls found in mainstream platforms. Unlike those tools, it is associated with use cases such as reconnaissance, phishing content generation, and analysis of leaked data. However, there is no official version of DarkGPT available through trusted or verified platforms. The term is used loosely: it may refer to modified AI models, experimental setups, or exaggerated claims shared across online communities.

This matters because it changes how we should approach the topic. Instead of asking whether DarkGPT exists as a product, it is more useful to ask what kind of behavior it represents. That behavior is already visible in how AI is being used in cyberattacks.

Is DarkGPT Real or Just a Concept

This is one of the most common questions, and the answer depends on what the term is being used to describe. In some cases, DarkGPT refers to modified versions of AI models where restrictions have been removed or bypassed. These are not officially supported and are often shared in limited circles.

In other cases, it refers to tools or scripts built on top of existing AI systems. These are not necessarily advanced systems, but they are presented as something more powerful. There is also a third category, where the term is used simply to attract attention. Not everything labeled as DarkGPT is real or functional. This uncertainty is important. It means the focus should not be on finding the tool, but on understanding the impact of AI when used without safeguards.

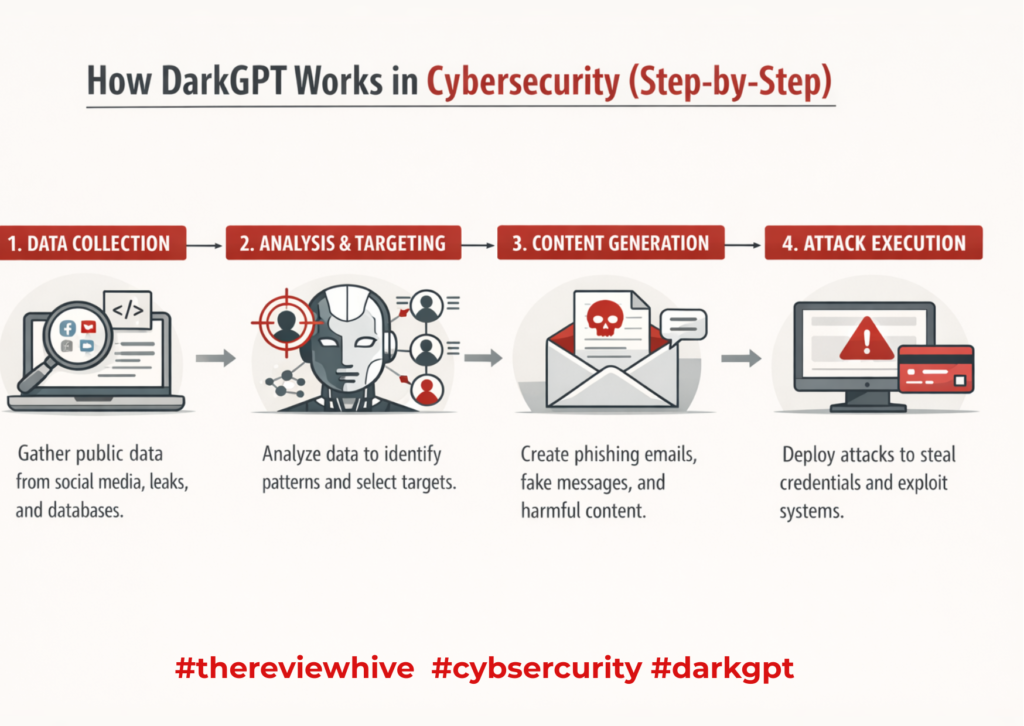

How DarkGPT Works in Cybersecurity (Step-by-Step)

Even without a clearly defined tool, the workflow associated with DarkGPT reflects how modern cyberattacks are evolving.

- Data Collection

Attackers gather leaked data, employee details, and publicly available information. The more context they collect, the more targeted the attack becomes.

- Reconnaissance

AI analyzes this data to identify patterns, relationships, and potential entry points. This reduces manual effort and speeds up decision-making.

- Content Generation

AI generates phishing emails, scripts, and messages that look natural. These messages often reflect internal tone and structure, making them harder to question.

- Attack Execution

Targets receive convincing communication and are prompted to act. The attack does not rely on technical exploits but on human response.

- Post-Access Activity

Attackers log in using real credentials and operate like legitimate users. They move across systems, escalate access, and extract data while staying under the radar. This is why identity-based cyberattacks are increasing. The goal is no longer to break systems, but to gain access through trust.

What Is OSINT and Why It Matters in DarkGPT Discussions

OSINT stands for Open Source Intelligence. It refers to collecting and analyzing information from publicly available sources such as social media, company websites, public records, and leaked datasets that are already accessible online. In the context of DarkGPT, OSINT plays a critical role. AI can process large amounts of open-source data quickly, helping identify patterns, relationships, and potential targets. This makes reconnaissance faster and more efficient compared to manual analysis.

For example, an attacker can gather employee details from LinkedIn, combine them with email data from a breach, and use AI to generate targeted communication. This is where OSINT connects directly with identity-based attacks. At the same time, OSINT is not inherently malicious. Security teams use it to detect threats, monitor exposure, and understand risk. The difference lies in how the information is used.

This is why discussions that describe DarkGPT as a powerful AI-driven OSINT tool for leaked database detection matter. The capability itself is not new. What has changed is the speed and scale at which AI can use that information.
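On the defensive side of OSINT, one common exposure check is whether a password already appears in a public breach corpus. The minimal sketch below assumes the Have I Been Pwned "Pwned Passwords" range endpoint, which uses a k-anonymity model: only the first five hex characters of the password's SHA-1 hash are sent, and the service returns every known hash suffix sharing that prefix as `SUFFIX:COUNT` lines. The function names are illustrative, and the network call appears only as a comment so the matching logic can be checked offline.

```python
import hashlib


def hash_parts(password: str) -> tuple[str, str]:
    """Split the uppercase SHA-1 hex digest into a 5-char prefix and 35-char suffix."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def breach_count(password: str, range_response: str) -> int:
    """Return how often the password appears in a range response, or 0 if absent.

    `range_response` is assumed to be the body returned for the password's
    prefix: one "SUFFIX:COUNT" entry per line.
    """
    _, suffix = hash_parts(password)
    for line in range_response.splitlines():
        candidate, _, count = line.strip().partition(":")
        if candidate.upper() == suffix:
            return int(count)
    return 0


# Only the 5-character prefix would be sent; the password and full hash stay local.
# Illustrative network call (assumed endpoint), using only the standard library:
#   from urllib.request import urlopen
#   prefix, _ = hash_parts(pw)
#   body = urlopen(f"https://api.pwnedpasswords.com/range/{prefix}").read().decode()
#   print(breach_count(pw, body))
```

A non-zero count does not prove an account was compromised, but it does mean the password is already in the kind of leaked data attackers feed into targeting.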

Where DarkGPT Is Used

DarkGPT-style behavior appears in several areas:

- OSINT (Open Source Intelligence) and Data Analysis – AI processes large datasets quickly, helping attackers identify targets and extract useful insights from publicly available information.

- Phishing and Social Engineering – AI-generated communication is more refined. It aligns with real-world tone and context, making it harder to detect.

- Script Assistance – AI supports code modification and scripting tasks. It helps reduce the effort needed to create or adapt tools.

- Dark Web Discussions – The term appears frequently in underground forums, though many references are not verified. This adds to the ambiguity around its existence.

Real Examples of DarkGPT in Action

Understanding DarkGPT becomes clearer when you move away from theory and look at how similar AI-driven behavior is already being used.

- AI-Generated Phishing Campaigns

In earlier phishing attacks, poor grammar and generic messaging were common red flags. That is no longer the case. AI now generates emails that reflect internal communication styles, correct formatting, and realistic urgency. An employee may receive a message that appears to come from IT support asking for a routine password reset. The language is clean, the timing feels appropriate, and nothing stands out as suspicious. This is where concerns about DarkGPT-style threats, from phishing to malware and broader cybercrime, become more relevant. The attack is no longer obvious. It blends into a normal workflow.

- Credential Theft Using Context-Aware Content

Modern attacks are designed to guide users rather than force entry. AI-generated messages often lead users to fake login pages that closely resemble real platforms. For example, a finance employee may receive a request to verify account details before processing a transaction. The email references internal processes and includes urgency. Once credentials are entered, the attacker gains access without exploiting any vulnerability. This shift from breaking systems to logging in reflects the growing impact of identity-based attacks.

- OSINT-Driven Targeted Attacks

Attackers no longer rely on guesswork. AI processes publicly available data to build detailed target profiles. This includes identifying job roles, responsibilities, reporting structures, and recent activity. This aligns with how DarkGPT is often discussed: as a powerful AI-driven OSINT tool for leaked database detection. The AI does not create new data. It makes existing data more usable and actionable.

- Social Engineering With AI Assistance

AI can generate structured conversation scripts for phone calls or chat-based scams. These scripts adapt based on user responses, making interactions feel natural. An attacker posing as technical support can guide a user step by step, creating a sense of trust and urgency. This shows how the risks and ethical concerns around DarkGPT-style AI features extend beyond systems and into human behavior.

- Tools Like FraudGPT and WormGPT

Tools such as FraudGPT and WormGPT have been mentioned in cybersecurity discussions for their ability to generate phishing content and scam templates without safety restrictions.

They are not necessarily advanced systems, but they demonstrate what happens when safeguards are removed. These examples reinforce a recurring point in discussions of DarkGPT's pros and cons: the same capabilities that assist analysis can also be misused.

- Blending Into Normal Activity

The most important shift is not the tool itself, but how the activity appears.

AI-assisted attacks no longer look suspicious. Emails look legitimate. Logins appear valid. Actions follow expected patterns. This matches how DarkGPT-style activity on the dark web is often described: the goal is not to stand out, but to operate quietly within normal behavior.
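The fake login pages in the credential theft example often sit on domains that differ from the real one by a character or two. As a defensive sketch, a mail gateway or web proxy could compare link domains against a list of trusted domains using edit distance. The trusted list, the `max_distance` threshold, and the function names are assumptions for illustration; this is one signal, not a complete phishing filter.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(
                prev[j] + 1,               # delete ca
                cur[j - 1] + 1,            # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = cur
    return prev[-1]


def is_lookalike(domain: str, trusted: set[str], max_distance: int = 2) -> bool:
    """True if `domain` is close to, but not exactly, a trusted domain.

    `trusted` is a hypothetical allow-list maintained by the organization.
    """
    domain = domain.lower().rstrip(".")
    if domain in trusted:
        return False
    return any(edit_distance(domain, t) <= max_distance for t in trusted)
```

Edit distance catches typosquats such as `examp1e.com` against `example.com`, but not added words like `example-login.com` or Unicode lookalike characters, so in practice it would sit alongside other checks.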

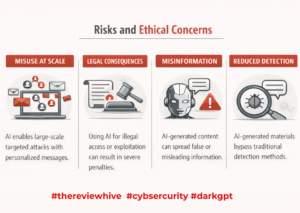

Risks and Ethical Concerns

The risks associated with DarkGPT are not tied to a single tool. They come from how AI is applied in real-world scenarios, especially when safeguards are removed or ignored.

- Misuse at Scale

AI removes the effort required to create targeted attacks. Instead of manually crafting messages, attackers can generate hundreds of personalized emails in minutes. These messages can reference roles, departments, or recent activity, making them more convincing. This scale increases the probability of success. Even a small response rate can lead to multiple compromised accounts.
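The arithmetic behind "even a small response rate" is straightforward. Assume, as a simplification, that each of $n$ messages independently leads to a compromise with probability $p$. The numbers in the second line are illustrative, not measured response rates.

$$
\mathbb{E}[\text{compromised accounts}] = np, \qquad P(\text{at least one}) = 1 - (1 - p)^{n}
$$

$$
n = 300,\; p = 0.01:\quad np = 3, \qquad 1 - 0.99^{300} \approx 1 - e^{-3.015} \approx 0.95
$$

Under these assumptions, a campaign that fools only one recipient in a hundred still has roughly a 95% chance of compromising at least one account.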

- Legal Consequences

Using AI for unauthorized access, phishing, or data exploitation is illegal in most jurisdictions. The challenge is that AI can blur intent. A tool may appear neutral, but its application determines legality. Individuals experimenting with unverified AI tools risk exposure to both legal action and secondary threats, such as downloading malicious software disguised as AI platforms.

- Misinformation and Manipulation

Unrestricted AI can generate content that appears credible but is misleading or false. This can be used in scams, impersonation, or manipulation campaigns. Because the content is well-structured and context-aware, it becomes harder for individuals to question its authenticity. This increases the effectiveness of social engineering.

- Reduced Detection

Traditional security systems rely on identifying anomalies such as poor grammar, unusual patterns, or known malicious signatures. AI-generated content often shows none of these signs. Messages appear natural, structured, and aligned with normal communication. This allows attackers to bypass basic detection mechanisms and operate longer without being noticed.

- Ethical Boundary Shift

AI introduces a grey area where the same capability can be used for both defense and offense. The ethical concern lies in how easily these boundaries can be crossed. A tool designed for analysis can be repurposed for targeting. This makes governance and responsible use more important than the technology itself.

Is DarkGPT Legal to Use

Legality depends entirely on usage. Using AI for research or authorized testing may be acceptable. Using it for phishing or unauthorized access is illegal. Many tools claiming to be DarkGPT are unverified, which increases risk for users who attempt to access them.

DarkGPT vs ChatGPT (Quick Comparison)

When comparing AI tools, one of the most common questions is about trust and control. This is where the question of DarkGPT vs ChatGPT, and which one to trust, becomes relevant.

Mainstream AI tools like ChatGPT operate within defined safety boundaries. They are built to restrict harmful outputs, follow usage policies, and provide responses that align with ethical and legal standards. In contrast, systems described as DarkGPT are often portrayed as having fewer restrictions. This lack of control is what makes them unpredictable. The outputs may not be filtered, which increases the risk of misuse, misinformation, or harmful content generation.

The difference is not capability, but control. Both types of systems can process language and generate responses. What separates them is how they are governed and what safeguards are in place. For users and organizations, this distinction matters. Trust is not just about what the AI can do. It is about how reliably it operates within safe and defined boundaries.

How to Install and Access DarkGPT (Reality Check)

Most DarkGPT installation guides follow a familiar structure but offer no verification. They often reuse setup steps from unrelated projects and create the illusion of a working system. There is no trusted, official source for DarkGPT, so users should approach such claims with caution.


How Organizations Can Defend Against AI-Driven Threats

The rise of AI-assisted attacks means organizations can no longer rely only on traditional perimeter security. The focus has shifted from protecting systems to protecting access, behavior, and identity. Each control needs to address how attackers actually operate today.

- Strengthen Authentication

Multi-factor authentication is no longer optional. Passwords alone are easy to steal through phishing or infostealers. Adding a second layer, such as an authenticator app or hardware token, reduces the chances of unauthorized access. At the same time, authentication should not be treated as a one-time check. Monitoring login patterns is equally important. A login from a new location, unusual device, or odd time should trigger verification. This helps detect cases where valid credentials are being misused.
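The login-pattern check described above can be sketched as a comparison between each successful login and the user's recent history. This is a minimal illustration, assuming a hypothetical per-user baseline of known countries, known devices, and usual working hours; a real deployment would draw these signals from its identity provider and tune them per organization.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class LoginEvent:
    user: str
    country: str
    device_id: str
    timestamp: datetime


@dataclass
class UserBaseline:
    # Hypothetical history built from this user's past successful logins.
    countries: set[str] = field(default_factory=set)
    devices: set[str] = field(default_factory=set)
    usual_hours: range = range(7, 20)  # assumed working hours, 07:00 to 19:59


def risk_signals(event: LoginEvent, baseline: UserBaseline) -> list[str]:
    """Return the reasons this login looks unusual for this user."""
    signals = []
    if event.country not in baseline.countries:
        signals.append("new_location")
    if event.device_id not in baseline.devices:
        signals.append("new_device")
    if event.timestamp.hour not in baseline.usual_hours:
        signals.append("unusual_time")
    return signals


def requires_step_up(event: LoginEvent, baseline: UserBaseline) -> bool:
    """A correct password is not enough: any unusual signal triggers re-verification."""
    return bool(risk_signals(event, baseline))


if __name__ == "__main__":
    baseline = UserBaseline(countries={"DE"}, devices={"laptop-42"})
    event = LoginEvent("alice", "BR", "laptop-42", datetime(2026, 3, 2, 3, 15))
    print(risk_signals(event, baseline))  # ['new_location', 'unusual_time']
```

Returning the list of reasons, rather than a bare yes or no, lets the verification prompt or the security log explain why the login was challenged.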

- Train Employees

Most AI-driven attacks still rely on human interaction. Employees are often the entry point.

Training should move beyond generic awareness. It should include real examples of modern phishing attempts that use clean language, internal references, and urgency. Employees need to understand that not all threats look suspicious anymore.

Regular simulations and updates help reinforce this. The goal is not just awareness, but the ability to pause and question before acting.

- Adopt Zero Trust

Zero Trust changes how access is handled. Instead of assuming that a user inside the network is safe, every request is verified. This includes checking identity, device health, and context before granting access. Even if an attacker gains valid credentials, they should not be able to move freely across systems. Zero Trust limits lateral movement, which is a key step in modern attacks.
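A Zero Trust decision can be pictured as a function that runs on every request and never treats network location as a reason to grant access. The sketch below is hypothetical: the request fields, the entitlement table, and the rule order are assumptions chosen to mirror the checks named above (identity, device health, context).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user: str
    mfa_verified: bool
    device_compliant: bool  # e.g. patched OS, disk encryption, endpoint agent running
    resource: str
    network: str            # "corporate" or "external"; context only, never grants access


# Hypothetical entitlements: the only resources each user may reach at all.
ENTITLEMENTS = {
    "alice": {"crm", "email"},
    "bob": {"email", "payroll"},
}

# Hypothetical set of resources that need extra verification off-site.
SENSITIVE = {"payroll"}


def decide(req: AccessRequest) -> str:
    """Evaluate each request independently; being 'inside' is not a pass."""
    if not req.mfa_verified:
        return "deny: identity not verified"
    if req.resource not in ENTITLEMENTS.get(req.user, set()):
        return "deny: not entitled"
    if not req.device_compliant:
        return "deny: device health"
    if req.resource in SENSITIVE and req.network != "corporate":
        return "step-up: sensitive resource from external network"
    return "allow"
```

Because `alice` is not entitled to `payroll`, an attacker holding her credentials is refused there even after passing MFA on a healthy device, which is the limit on lateral movement described above.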

- Monitor Behavior

Attackers using stolen credentials often behave differently from legitimate users. They may access systems they normally wouldn’t, download large volumes of data, or attempt multiple actions in a short time. Behavior monitoring helps detect these patterns. Instead of focusing only on login success or failure, it looks at what happens after access is granted.

This is critical because many attacks today appear legitimate at the login stage.
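Post-login monitoring can be sketched as a per-user sliding window over recent actions. This minimal example, with hypothetical thresholds and a hypothetical per-user list of usual systems, flags the three patterns mentioned above: access to unfamiliar systems, bursts of activity, and large data transfers.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Action:
    user: str
    system: str
    bytes_out: int
    ts: float  # seconds since epoch


class BehaviorMonitor:
    """Flags post-login behavior that departs from a user's usual pattern.

    Thresholds and the per-user system baseline are illustrative assumptions.
    """

    def __init__(self, baseline_systems, window_s=300, max_actions=50,
                 max_bytes=500_000_000):
        self.baseline_systems = baseline_systems  # user -> set of usual systems
        self.window_s = window_s
        self.max_actions = max_actions
        self.max_bytes = max_bytes
        self.recent = defaultdict(deque)  # user -> actions inside the window

    def observe(self, a: Action) -> list[str]:
        q = self.recent[a.user]
        q.append(a)
        # Drop actions that have fallen out of the sliding time window.
        while q and a.ts - q[0].ts > self.window_s:
            q.popleft()
        alerts = []
        if a.system not in self.baseline_systems.get(a.user, set()):
            alerts.append(f"unusual_system:{a.system}")
        if len(q) > self.max_actions:
            alerts.append("action_burst")
        if sum(x.bytes_out for x in q) > self.max_bytes:
            alerts.append("bulk_download")
        return alerts
```

The login that preceded these actions may have been perfectly valid; the alerts come only from what the session does afterwards.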

- Limit Access

Access should be based on role and necessity. Users should only have the permissions required to perform their tasks. If an account is compromised, limited access reduces the damage. It prevents attackers from reaching sensitive systems or escalating privileges easily. Regular reviews of access rights are important. Over time, users often accumulate permissions they no longer need.
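A periodic access review can start from a simple question: which granted permissions have not been used recently? The sketch below assumes the organization can export grants and last-use dates per user; the data shapes, the function name, and the 90-day default are illustrative assumptions.

```python
from datetime import date, timedelta


def stale_permissions(granted, last_used, today, max_idle_days=90):
    """Return {user: [permissions]} granted but not used within max_idle_days.

    granted:   hypothetical export, dict user -> set of permission names
    last_used: hypothetical export, dict (user, permission) -> date of last use;
               a missing key means the permission was never used
    """
    cutoff = today - timedelta(days=max_idle_days)
    report = {}
    for user, perms in granted.items():
        stale = []
        for perm in sorted(perms):
            used = last_used.get((user, perm))
            if used is None or used < cutoff:
                stale.append(perm)
        if stale:
            report[user] = stale
    return report
```

Flagged permissions are candidates for removal or re-approval rather than automatic revocation; a permission used only at quarter-end, for example, can look idle for most of the year.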

AI-driven attacks are effective because they blend into normal activity. They do not rely on obvious signs or system vulnerabilities. They rely on trust, access, and behavior. Defending against them requires a shift in mindset. It is no longer about keeping attackers out. It is about controlling what happens when they get in.

Why DarkGPT Matters in 2026

DarkGPT matters in 2026 not because of a single tool, but because it reflects how AI is changing the mechanics of cyberattacks. AI has reduced the time required to plan and execute attacks. Tasks that once took hours or days, such as writing phishing emails, analyzing targets, or preparing scripts, can now be done in minutes. This allows attackers to operate faster and target more individuals at once. At the same time, the scale of attacks has increased. AI enables attackers to generate large volumes of tailored communication instead of relying on generic messages. This improves success rates because the content feels relevant to the target. The attack is no longer random. It is shaped around the individual.

This shift has also changed the type of attacks being used. Identity-based cyberattacks are becoming more common because they are easier to execute and harder to detect. Instead of exploiting system vulnerabilities, attackers focus on stealing credentials and gaining legitimate access. Once inside, they do not need to behave like attackers. They log in, access systems, and move through environments using valid credentials. This reduces the chances of triggering traditional security alerts, which are often designed to detect external threats.

Another important change is the role of AI in decision-making. Attackers can use AI to analyze which targets are more likely to respond, what type of message to send, and when to send it. This makes attacks more strategic and less dependent on trial and error. The result is a shift from technical intrusion to behavioral manipulation. Systems may remain secure, but users become the entry point. This is why DarkGPT matters. It represents a move toward attacks that rely on trust, context, and access rather than technical weaknesses. Understanding this shift is critical for building defenses that address how attacks actually happen today.

To Sum Up

DarkGPT may not exist as a clearly defined tool, but the behavior it represents is already shaping real-world cyberattacks. AI is no longer just supporting attackers; it is changing how attacks are designed, targeted, and executed. The shift is not about new vulnerabilities. It is about how existing access is being exploited. Attackers are moving away from breaking systems and focusing on gaining trust, stealing credentials, and operating as legitimate users. This makes detection harder and response slower. Traditional security models that focus only on external threats are no longer enough. The real concern is not the name DarkGPT. It is the direction cybersecurity is heading in. Understanding this shift is essential, because the attacks are already happening, and they are becoming harder to recognize.

FAQs on DarkGPT

- Is DarkGPT a real cybersecurity tool

DarkGPT is not a verified standalone tool. It is a concept associated with unrestricted AI use in cybersecurity.

- How does DarkGPT enable phishing attacks

DarkGPT-style workflows use AI to generate realistic, context-aware phishing messages that increase the likelihood of user interaction.

- Are FraudGPT and WormGPT examples of DarkGPT

They reflect similar behavior where AI is used without safeguards to generate phishing or scam content.

- Can DarkGPT be safely downloaded

There is no official or trusted source. Most download claims are unverified and may introduce security risks.

- Why are identity-based cyberattacks linked to DarkGPT

AI reduces the effort needed to create convincing communication, making credential theft easier and more scalable.