AI Handling Dark Web Data: How AI Detects Hidden Cyber Threats Early

AI handling dark web data is becoming a core part of modern cybersecurity. The volume of hidden threat data has grown beyond human scale. Reports estimate that over 24 billion leaked credentials are circulating online, many of them appearing first on dark web forums. At the same time, security teams can manually review less than 10% of available threat data, creating a visibility gap that attackers exploit. This is where AI changes the equation. Organizations now use AI to scan hidden communities, track stolen data, and detect early signals of cyberattacks. The goal is simple: identify threats before they surface. But the process is tightly controlled. It depends on filtered data, legal compliance, and careful interpretation rather than open access.

This shift is closely tied to how cybercrime itself is evolving. As explored in "80% of Ransomware Now Uses AI, Here’s What That Means," attackers are already using AI to automate and scale operations. That makes defensive use of AI not just useful, but necessary.

TL;DR

AI handling dark web data helps organizations detect cyber threats before they become visible on the surface web. Instead of direct access, systems rely on controlled data collection and curated sources. Dark web data analysis uses AI techniques like Natural Language Processing to identify patterns, extract indicators, and surface risks. AI in threat intelligence improves speed and scale, but it depends heavily on filtering, legal compliance, and human oversight. Dark web monitoring using AI is effective only when it avoids raw data exposure and focuses on structured, safe datasets.

What Counts as Dark Web Data

The dark web consists of hidden services accessed through tools like Tor Browser. These spaces include forums, marketplaces, private chat groups, and leak sites where cybercriminal activity often surfaces early.

What makes this data valuable is timing and intent. On these platforms, threat actors don’t just share information; they coordinate. Discussions of newly discovered vulnerabilities, the sale of stolen credentials, and early-stage attack planning often appear here before traditional security systems detect them.

This creates a gap between signal and response. AI helps close that gap.

Recent cybersecurity reports highlight the scale:

- Over 24 billion leaked credentials are circulating across underground sources

- Around 30% of cyberattacks involve stolen credentials, making them a primary entry point

- Ransomware leak sites often expose victim data before official disclosure, sometimes as part of extortion strategies

This is not random noise. Patterns repeat. Certain forums specialize in specific activities. Threat actors build reputations over time. When processed correctly, this data becomes a source of early intelligence.

This early-stage intelligence is also what powers newer AI-driven models. If you want a deeper look at how these systems are structured, this DarkGPT explained guide breaks down the architecture, risks, and real-world implications.

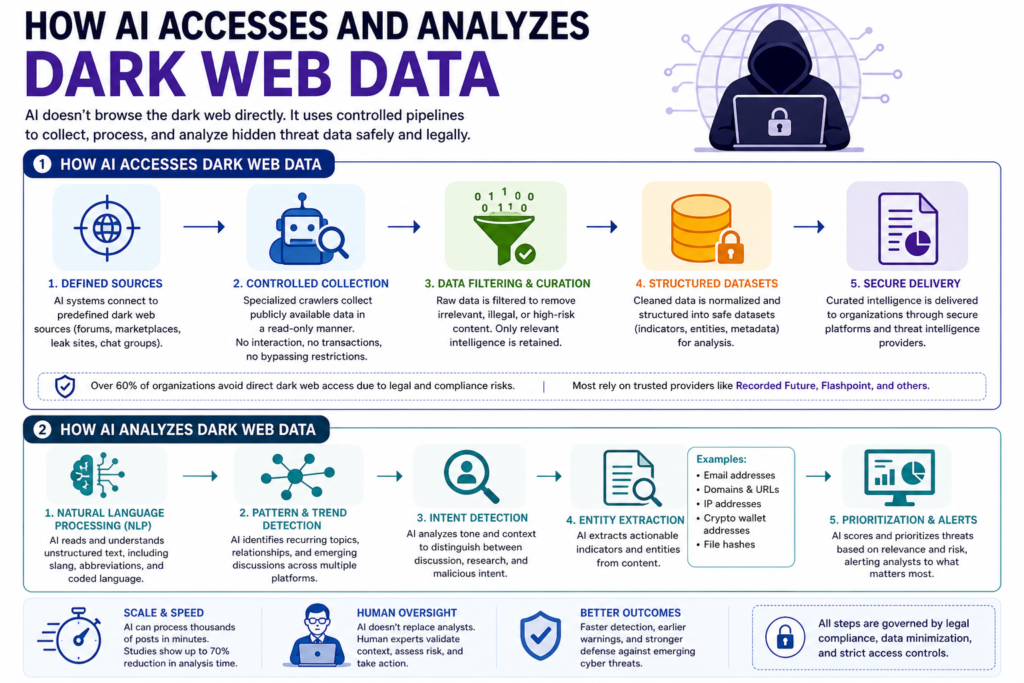

A common misconception is that AI directly browses the dark web. It doesn’t. The process is controlled from the start because the risks are not just technical, but legal and operational.

Organizations use structured data pipelines instead of open access. Specialized crawlers connect to predefined sources and collect data in a read-only manner. They don’t interact with users, make transactions, or attempt to bypass restrictions. The goal is observation, not participation.
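The read-only, predefined-source rule described above can be sketched as a small gate that every outgoing request must pass. This is a minimal illustration under stated assumptions: the host names, `FetchRequest`, and `is_permitted` are hypothetical names invented here, and a real pipeline would load its approved sources from reviewed configuration.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit

# Hypothetical allowlist of approved sources (placeholder host names).
APPROVED_HOSTS = frozenset({"forum-a.example", "leaksite-b.example"})

# Only methods that read data are allowed; nothing that posts or submits.
READ_ONLY_METHODS = frozenset({"GET", "HEAD"})


@dataclass(frozen=True)
class FetchRequest:
    method: str
    url: str


def is_permitted(request: FetchRequest) -> bool:
    """Allow a request only if it is read-only and targets an approved host."""
    host = (urlsplit(request.url).hostname or "").lower()
    return request.method.upper() in READ_ONLY_METHODS and host in APPROVED_HOSTS
```

Keeping the check in one place means any request to a new source, or any write-style method, is rejected by default rather than by convention.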

Industry reports suggest that over 60% of organizations avoid direct dark web access due to legal and compliance risks. This is why most rely on curated intelligence sources instead of raw data collection.

Many organizations depend on providers like Recorded Future and Flashpoint. These platforms monitor dark web sources, filter content, and deliver structured insights. This reduces exposure while still providing relevant intelligence.

This approach mirrors how modern AI systems are designed. Access is limited, sources are defined, and data is filtered before analysis. Instead of collecting everything, the focus is on collecting what matters.

Once the data is cleaned, AI systems move from collection to interpretation. This is where most of the value comes in.

AI uses techniques from Natural Language Processing to process large volumes of unstructured text. But it’s not just about reading content. It’s about identifying patterns across fragmented and often coded conversations.

On the dark web, information is rarely direct. Threat actors use slang, abbreviations, and indirect references. A single post may not mean much on its own. But when similar discussions appear across multiple forums, patterns begin to form. AI helps connect those signals.

For example, if multiple users mention the same vulnerability across different platforms, even in slightly different terms, AI can group those references together. This helps identify emerging threats before they are widely exploited.
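One simple way to picture this grouping is to normalize differently written vulnerability identifiers to a single form and count how many distinct sources mention each one. The sketch below is an assumption-laden stand-in: it handles only CVE-style identifiers with a regular expression, whereas the systems described here would likely also match slang and paraphrases. The function names and the CVE numbers in the usage example are made up.

```python
import re
from collections import defaultdict

# Matches "CVE-2099-00001", "cve 2099 00001", "CVE_2099_00001", and similar.
CVE_PATTERN = re.compile(r"\bCVE[-_ ]?(\d{4})[-_ ]?(\d{4,7})\b", re.IGNORECASE)


def group_by_cve(posts):
    """posts: iterable of (source, text). Returns {normalized CVE id: set of sources}."""
    groups = defaultdict(set)
    for source, text in posts:
        for year, number in CVE_PATTERN.findall(text):
            groups[f"CVE-{year}-{number}"].add(source)
    return dict(groups)


def emerging(posts, min_sources=2):
    """Return CVE ids mentioned on at least `min_sources` distinct sources."""
    grouped = group_by_cve(posts)
    return sorted(cve for cve, sources in grouped.items() if len(sources) >= min_sources)
```

For example, with invented posts:

```python
posts = [
    ("forum-a", "selling exploit for CVE-2099-00001"),
    ("forum-b", "anyone tested cve 2099 00001 yet?"),
    ("forum-b", "old news: CVE_2098_1111"),
]
print(emerging(posts))
```

Only the identifier seen on two different sources is returned; the single-source mention stays below the threshold.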

Another layer is intent detection. AI analyzes tone and context to distinguish between discussion and action. There’s a difference between someone discussing a vulnerability and someone actively selling access to it. That distinction helps prioritize threats.
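To make the discussion-versus-action distinction concrete, here is a deliberately crude keyword heuristic. It is not how the article's systems work internally; production tools would more likely use a trained classifier that weighs tone and context. The cue lists and the `classify_intent` name are hypothetical.

```python
# Hypothetical cue phrases; real systems would learn these signals from labeled data.
ACTION_CUES = ("selling", "for sale", "wts", "price", "escrow", "buy now")
DISCUSSION_CUES = ("anyone know", "how does", "has anyone", "question", "curious")


def classify_intent(text: str) -> str:
    """Label a post 'action', 'discussion', or 'unclear' by counting cue phrases."""
    lowered = text.lower()
    action = sum(cue in lowered for cue in ACTION_CUES)
    discussion = sum(cue in lowered for cue in DISCUSSION_CUES)
    if action > discussion:
        return "action"
    if discussion > action:
        return "discussion"
    return "unclear"
```

Even this toy version shows why the label matters: a post tagged "action" can be routed to an analyst sooner than one tagged "discussion".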

Entity extraction is also a key part of how AI analyzes dark web data. Instead of storing full conversations, systems extract actionable indicators such as:

- Email addresses linked to breaches

- Domains associated with phishing campaigns

- Cryptocurrency wallet addresses used in transactions

These indicators are then fed into security systems for monitoring and response.
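The extraction step can be sketched with regular expressions for the three indicator types listed above. This is a simplified illustration, not a complete parser: the patterns are approximations, the wallet pattern covers only common Bitcoin address shapes without validating checksums, and `extract_indicators` is a hypothetical name.

```python
import re

EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
DOMAIN_RE = re.compile(
    r"\b(?:[a-z0-9](?:[a-z0-9-]{0,61}[a-z0-9])?\.)+[a-z]{2,}\b", re.IGNORECASE
)
# Legacy Base58 addresses (starting with 1 or 3) and bech32 addresses (bc1...).
BTC_RE = re.compile(r"\b(?:[13][a-km-zA-HJ-NP-Z1-9]{25,34}|bc1[a-z0-9]{25,59})\b")


def extract_indicators(text: str) -> dict:
    """Return de-duplicated emails, standalone domains, and wallet-like strings."""
    emails = {m.lower() for m in EMAIL_RE.findall(text)}
    # Blank out emails first so their parts are not re-counted as standalone domains.
    without_emails = EMAIL_RE.sub(" ", text)
    domains = {m.lower() for m in DOMAIN_RE.findall(without_emails)}
    wallets = set(BTC_RE.findall(text))
    return {
        "emails": sorted(emails),
        "domains": sorted(domains),
        "wallets": sorted(wallets),
    }
```

Only the extracted indicators are returned, which fits the point above: the surrounding conversation does not need to be stored for the indicators to be useful downstream.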

What makes this effective is scale. A human analyst might review hundreds of posts a day. AI can process thousands in minutes and highlight only what matters. Studies suggest that AI can reduce analysis time by up to 70%, allowing teams to focus on decisions instead of data sorting.

At the same time, AI does not replace human judgment. It filters, prioritizes, and surfaces insights, but analysts validate and act on those findings. That balance is what makes AI in threat intelligence reliable.

Legal realities of AI cybersecurity dark web systems

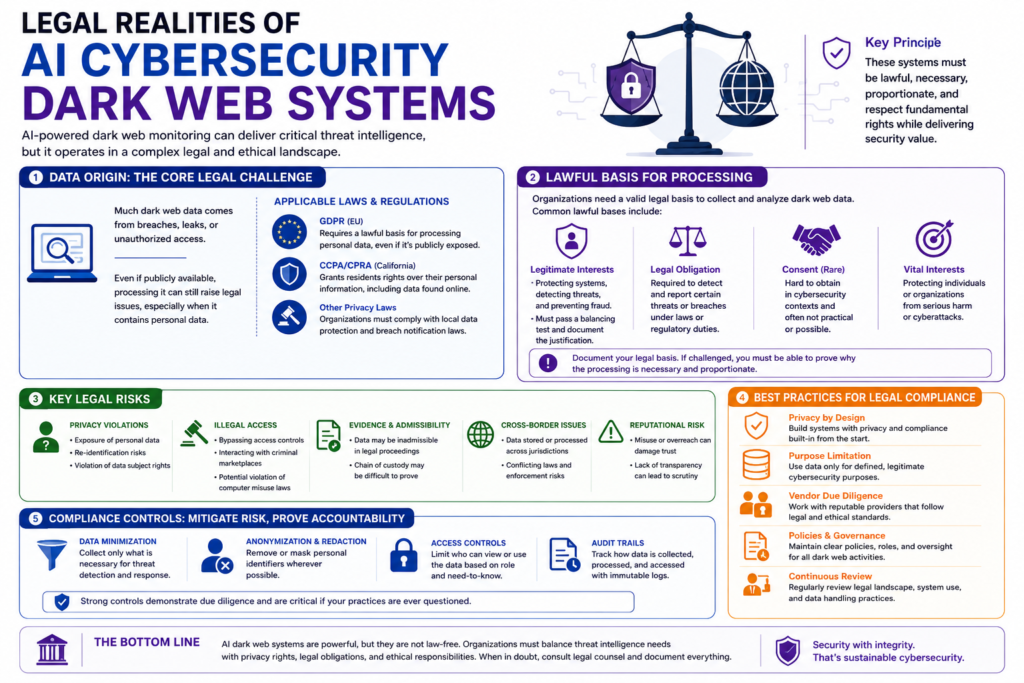

AI cybersecurity dark web systems operate in a space where technical capability meets strict legal boundaries. The challenge is not just collecting and analyzing data but proving that every step is lawful and justified.

One of the main issues is data origin. Much of the data found on the dark web comes from breaches, leaks, or unauthorized access. Even if this data is publicly available, using it can still raise legal concerns. Regulations like the General Data Protection Regulation require organizations to have a lawful basis for processing personal data. This applies even when the data has already been exposed elsewhere.

There is also the question of purpose limitation. Organizations must clearly define why they are collecting and using the data. Using dark web data for threat detection may be justified, but using the same data to train general-purpose AI models can cross legal boundaries. The intent behind data use matters.

Another layer is jurisdiction. Dark web data does not respect geographic borders, but laws do. A dataset collected legally in one country may fall under stricter regulations in another. For global organizations, this creates complexity. They must ensure compliance across multiple legal frameworks at the same time.

Access methods also matter. Interacting with certain platforms, even passively, can carry legal risks depending on local laws. This is why most organizations avoid direct engagement and rely on controlled, read-only collection methods or third-party intelligence providers.

To manage these risks, organizations apply strict controls:

- Data minimization, collecting only what is necessary

- Anonymization and redaction, removing personal identifiers wherever possible

- Access controls, limiting who can view or use the data

- Audit trails, tracking how data is collected and processed
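Two of these controls, redaction and audit trails, can be illustrated in a few lines. The sketch below assumes email addresses are the identifier being removed and replaces each with a salted hash token. Note that this is pseudonymization rather than full anonymization, and whether it satisfies a given regulation is a legal question, not a coding one. The function names and record fields are hypothetical.

```python
import hashlib
import re
from datetime import datetime, timezone

EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")


def redact_emails(text: str, salt: str) -> str:
    """Replace each email with a stable token derived from a salted SHA-256 hash."""
    def _token(match: re.Match) -> str:
        value = (salt + match.group(0).lower()).encode("utf-8")
        return f"<email:{hashlib.sha256(value).hexdigest()[:12]}>"
    return EMAIL_RE.sub(_token, text)


def audit_entry(actor: str, action: str, record_id: str) -> dict:
    """Build one audit-trail record with a UTC timestamp."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "action": action,
        "record_id": record_id,
    }
```

Because the token is stable for a given salt, analysts can still see that the same address appears in several posts without seeing the address itself.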

Because of these constraints, many organizations avoid training AI models on raw dark web datasets. Instead, they use filtered, structured, or anonymized data. Industry trends suggest that over 70% of enterprises prefer processed datasets to reduce legal exposure.

In practice, legal compliance shapes how these systems are built. It determines what data can be used, how it is handled, and where the limits are. AI handling dark web data is not about accessing everything. It’s about operating within boundaries while still extracting meaningful intelligence.

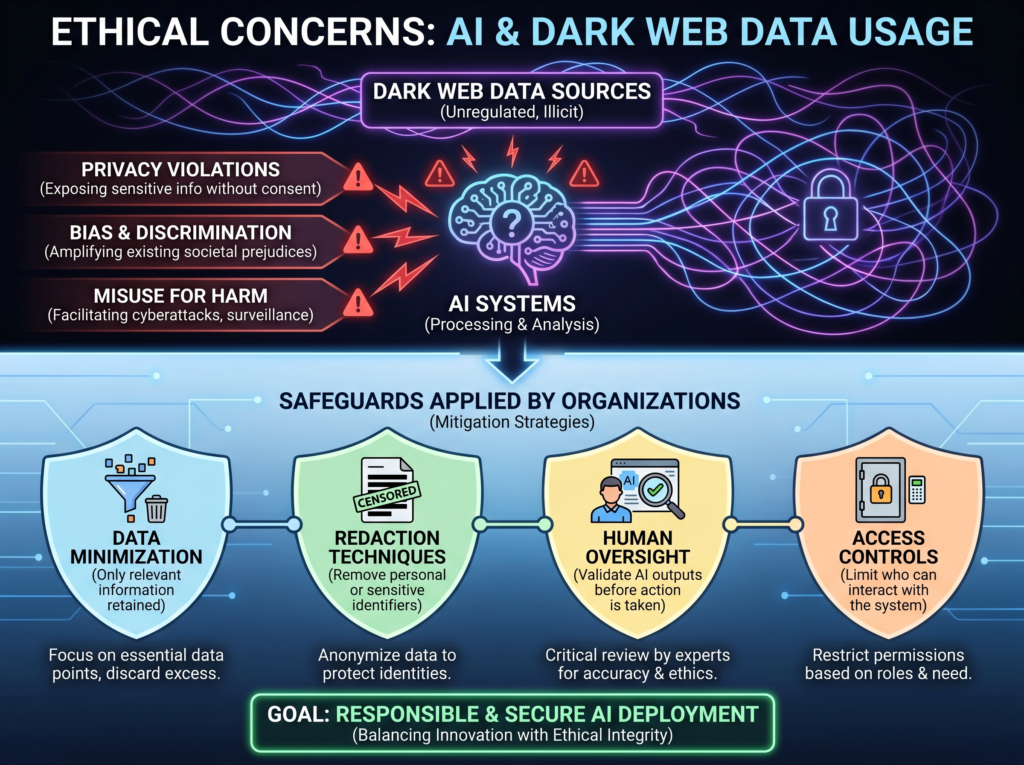

Ethical concerns in AI and dark web data usage

Legal compliance is only one part of the picture. Ethical concerns are just as important, especially when AI systems deal with sensitive and often harmful content from the dark web.

One of the main issues is data origin and consent. Much of the data found on the dark web comes from breaches, leaks, or unauthorized access. Even if this data is publicly available in underground forums, it does not mean it was shared with consent. Using such data in AI systems raises questions about ownership, privacy, and responsible use.

Another concern is exposure to harmful content. Dark web datasets often include toxic language, extremist material, and explicit discussions around illegal activity. If this data is used without proper filtering, it can influence how AI systems behave. Models may reflect biased or unsafe patterns, which can affect outputs and decision-making.

There is also the risk of data memorization and unintended leakage. AI models trained on sensitive datasets can sometimes reproduce parts of that data. In the context of dark web content, this could mean exposing personal information, financial data, or confidential details. This is not just a technical flaw; it is a serious ethical risk.

Bias is another factor that is often overlooked. Dark web communities are not representative of broader populations. Training AI on such data without context can skew results, leading to inaccurate threat assessments or over-prioritization of certain signals.

To address these concerns, organizations apply multiple safeguards:

- Data minimization, where only relevant information is retained

- Redaction techniques, to remove personal or sensitive identifiers

- Human oversight, to validate AI outputs before action is taken

- Access controls, to limit who can interact with the system

These measures are not optional. They are necessary to ensure that AI systems remain responsible and trustworthy. In practice, ethical AI handling dark web data is about restraint. It’s not about collecting everything, but about knowing what to collect, how to process it, and when to stop.

Real-world impact of AI in threat intelligence

AI in threat intelligence is already making a measurable impact, not just in theory but in how organizations respond to real threats.

The scale of cybercrime has increased sharply in recent years. According to the SonicWall Cyber Threat Report (2024), ransomware attacks have risen by over 70% year-over-year in several regions. At the same time, underground markets continue to grow. The Privacy Affairs Dark Web Price Index (2023–2024) estimates that more than 15 million credit card records are sold on dark web marketplaces each year.

This creates a volume problem. Security teams are overwhelmed. Data from the IBM Security Cost of a Data Breach Report (2023) suggests that analysts can manually review less than 10% of available threat data.

This is where AI in threat intelligence becomes essential.

AI systems can process large volumes of dark web data in near real time and surface signals that would otherwise go unnoticed. Instead of reviewing thousands of posts manually, analysts receive prioritized alerts based on relevance and risk.
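Prioritization of this kind can be pictured as a scoring function followed by a threshold. The sketch below is illustrative only: the `Signal` fields, the weights, and the 0.5 threshold are assumptions chosen for the example, not values taken from any report or product.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    summary: str
    mentions_org: bool   # does the post reference the monitored organization?
    intent: str          # e.g. "action", "discussion", or "unclear"
    source_count: int    # number of distinct sources carrying the same signal


def risk_score(signal: Signal) -> float:
    """Combine relevance, intent, and corroboration into a score (hypothetical weights)."""
    score = 0.0
    if signal.mentions_org:
        score += 0.5
    if signal.intent == "action":
        score += 0.3
    score += min(signal.source_count, 4) * 0.05  # cap the corroboration bonus
    return round(score, 2)


def prioritize(signals, threshold=0.5):
    """Return signals at or above the threshold, highest score first."""
    scored = [(risk_score(s), s) for s in signals]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [s for score, s in scored if score >= threshold]
```

Analysts then see a short ranked list instead of the full stream, and the threshold becomes a tunable trade-off between missed signals and alert fatigue.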

A clear example can be seen in ransomware operations. Groups often announce breaches or leak stolen data on dark web portals before the incident becomes public. AI systems monitoring these sources can detect these posts early and alert security teams, giving organizations a critical response window.

This speed matters because attackers are also using AI. As discussed in "80% of Ransomware Now Uses AI, Here’s What That Means," automation is reducing the time between reconnaissance and execution.

AI helps close that gap. It doesn’t eliminate risk, but it improves visibility. It allows organizations to move from reactive response to early detection.

What makes AI handling dark web data effective

The effectiveness of AI handling dark web data comes down to control. Not just in how data is collected, but in how it is filtered, interpreted, and used.

Successful systems follow a layered approach, and in practice each layer plays a critical role.

Controlled data collection is the starting point. Instead of scanning everything, systems focus on predefined sources. This reduces noise and limits exposure to unnecessary risk.

Strong filtering before analysis makes the data usable. Dark web content is fragmented and often mixed with irrelevant or harmful material. Filtering removes noise and prepares the data for meaningful analysis.

AI combined with human oversight keeps the system grounded. AI identifies patterns, but analysts validate context and decide on action.

A practical example can be seen in financial institutions monitoring credential leaks. AI detects stolen login data on underground forums, flags it, and extracts key indicators. Analysts verify the findings and take action before the data is misused.
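The credential-leak workflow above can be sketched as a matching step that hands results to a human rather than acting on them. This is a minimal illustration: the domain names, the `pending_analyst_review` status, and the function name are hypothetical, and the leaked addresses are assumed to have been extracted already.

```python
def flag_leaked_accounts(leaked_emails, monitored_domains):
    """Return leaked addresses on monitored domains, queued for analyst review."""
    monitored = {domain.lower() for domain in monitored_domains}
    flagged = []
    for email in leaked_emails:
        normalized = email.strip().lower()
        # Everything after the last "@" is treated as the domain.
        _, _, domain = normalized.rpartition("@")
        if domain in monitored:
            flagged.append({"email": normalized, "status": "pending_analyst_review"})
    return flagged
```

The function only flags. Password resets, account locks, or customer notifications stay with the analyst who verifies the finding.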

When these layers work together, the system becomes efficient and reliable. It surfaces early signals without compromising accuracy.

Understanding how AI analyzes dark web data is essential for building systems that operate safely and deliver real-world value.

To sum up

AI handling dark web data is not about unrestricted access. It’s about extracting useful insights from complex environments.

AI and dark web data strategies work best when they focus on filtered inputs, legal compliance, and human oversight. Dark web data analysis powered by AI improves early threat detection, but only when used responsibly.

Key Points

- AI handling dark web data enables early threat detection

- AI and dark web data require controlled, not open, access

- Dark web data analysis depends on strong filtering layers

- AI in threat intelligence improves speed and accuracy

- Dark web monitoring using AI enhances visibility into hidden risks

- AI cybersecurity dark web systems must follow legal and ethical safeguards

- How AI analyzes dark web data determines reliability

FAQs

What is AI handling dark web data?

AI handling dark web data refers to using artificial intelligence to collect, filter, and analyze hidden online content to detect cyber threats early.

How does AI analyze dark web data?

AI uses Natural Language Processing and pattern detection to identify trends, extract indicators, and highlight risks.

Is dark web monitoring using AI safe?

It is safe when strict controls are in place, including filtered datasets, legal compliance, and human oversight.

Can AI be trained on dark web data?

Most organizations avoid raw data and instead use anonymized or processed datasets.

What is DarkGPT and how is it related?

DarkGPT refers to experimental AI models designed to analyze hidden data sources. In practice, real-world systems use controlled and compliant approaches.